Axel Krieger’s idea for a new medical tool began in conversations with a friend, a doctor in the special forces. The doctor would tell Krieger about traveling to remote areas and trying to treat patients with traumatic injuries using inadequate equipment.

Prompted by these bleak circumstances, Krieger, a University of Maryland professor, and his friend began to think up a solution that could help others in similar situations: medical robots.

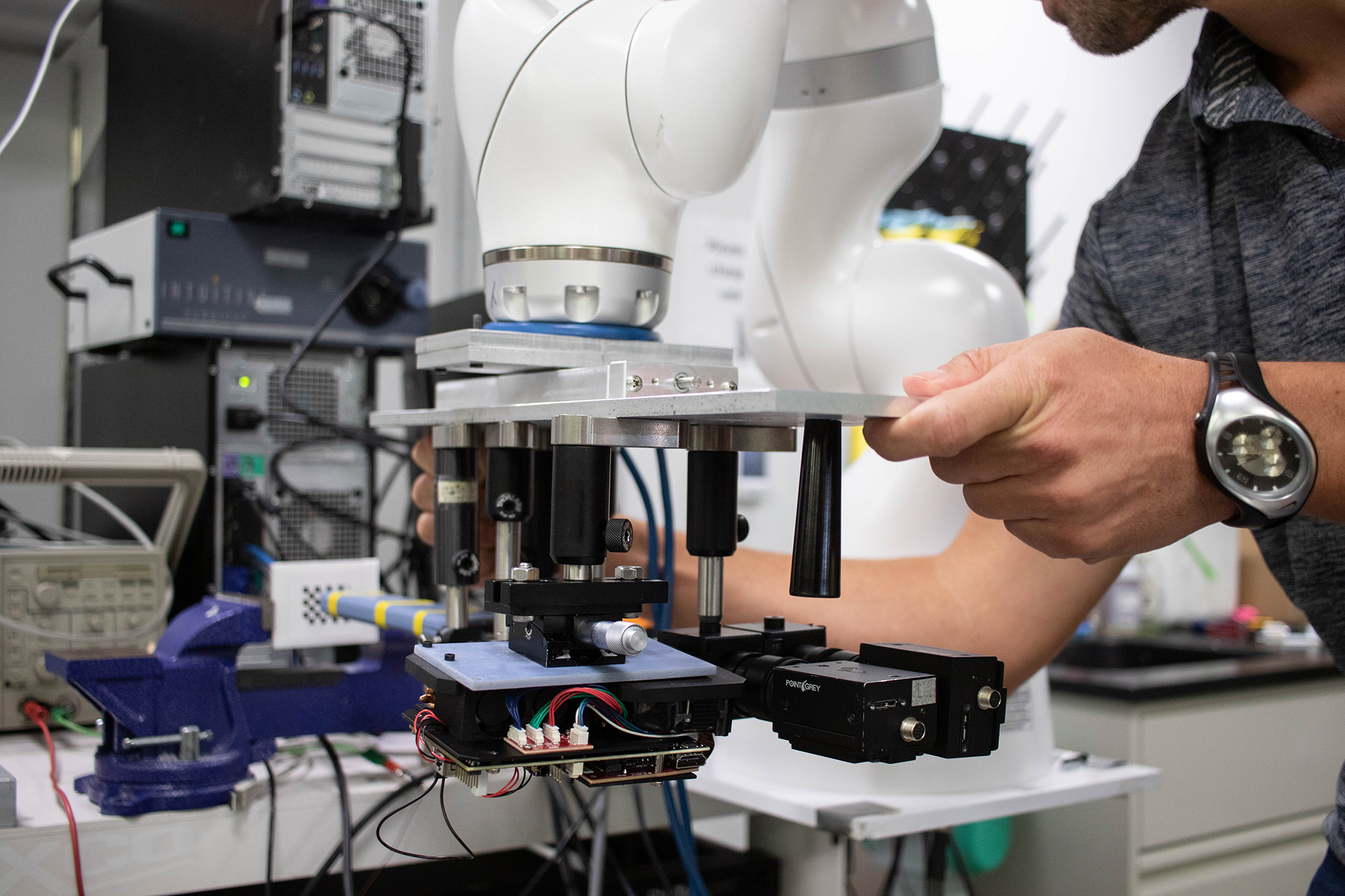

Starting in 2018, Krieger worked with a team of researchers to develop a robotic surgical assistant prototype designed to help diagnose a patient in an emergency vehicle after a traumatic injury. Now, more than 1 ½ years into the project, they’re continuing work on a robot that scans a patient for bleeding with a 2D and 3D camera and can perform an ultrasound controlled by a remote professional.

The robot was engineered to focus on abdominal injuries because, unlike injuries to extremities, they can’t be treated with a tourniquet, which squeezes a limb to stop blood flow, Krieger said. That makes them essential to tend to quickly.

“The first hour is so critical for [trauma] patients,” Krieger said. “If you don’t do definitive treatment to stop the bleed[ing] in that first hour … the risk of the trauma increases dramatically.”

The robotic assistant first scans the patient at a safe distance — so as not to aggravate any injuries — then zeroes in closer, creating a detailed map of the abdomen, he said.

After it collects measurements of the abdomen, it switches into a manipulation mode, where a remote surgeon or radiologist takes control to perform an ultrasound and check for hemorrhages, Krieger said.

Thorsten Fleiter, an associate professor working at this university’s Shock Trauma Center, is focused on the imaging portion of the project. This component enables the robot to locate the source of bleeding and is vital to determining the best form of treatment.

Lydia Zoghbi, a doctoral student studying mechanical engineering, is focusing on the machine learning that allows the robot to detect skin and wounds, which is essential for it to perform any of its functions.

The machine learning element is one of the main components that contribute to the robot’s autonomy, Zoghbi said. Her work teaches the robot to identify different regions of the abdomen.

She’s happy to leave the hands-on medical work, such as confirming the validity of the ultrasounds, to Fleiter.

“We need his input to be able to validate … how realistic is our work compared to what actually happens in real life,” she said.

The prototype, Krieger said, has been tested on a phantom: a 3D-printed CT scan that’s a life-size copy of the human body, composed of gel that imitates the human body’s properties.

Although it’s not being tested on real people yet, researchers are working to improve the robot.

In future iterations, they plan to equip the robot with the ability to inject a foam into trauma patients’ bodies, providing internal pressure and stopping bleeding.

The next step, Krieger said, is to make the robot entirely autonomous. Then, it would not rely on an internet network connecting the emergency vehicle and hospital, and there would be no need for a medical professional to perform a remote ultrasound.

Emergency medical technicians, Krieger said, are trained to stabilize patients, but not to diagnose internal hemorrhages. EMTs are already responsible for a number of tasks, he said, including communicating with the hospital and finding the IV line.

“The idea is to use a machine that will do that and will make it independent from whoever is taking care of the patient,” Fleiter said. “You would always get the constant quality of results using the machinery.”

Krieger is excited about the surgical assistant’s ability to save lives.

“I love the idea of developing technology that can help people,” Krieger said. “I think that’s, you know, such a great luxury to work on things that can help.”